Transistors, microchips and the digital revolution

The development of the transistor has had a huge impact on everyone's daily life and marked the start of the digital age, so it well deserves a place on this website. Transistors are the basic building blocks of virtually every electronic device in use today. This page gives a very basic introduction to them and goes on to describe their development into the microchip.

By Neil Cryer BSc PhD, former university physics lecturer

What are transistors?

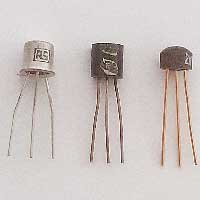

Transistors are very small, simple electronic devices that can control an electric signal. A typical transistor has three electrical connections. Essentially, the current passing between two of the connections can be controlled by a much smaller current entering the third. Most are made of silicon, an element found in common sand, which helps to explain their relative cheapness.
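For readers who like to see ideas as code, here is a minimal sketch of that controlling action. It is a deliberately idealised model, not a description of any real device: the function name `controlled_current`, the gain of 100 and the 0.5 amp ceiling are all assumptions chosen purely for illustration.

```python
def controlled_current(control_current_a, gain=100.0, max_current_a=0.5):
    """Idealised transistor: a small control current sets a larger current.

    control_current_a: current entering the third connection, in amps.
    gain:              assumed amplification factor (illustrative value only).
    max_current_a:     assumed ceiling set by the rest of the circuit.
    """
    if control_current_a <= 0:
        # No control current: the transistor is switched off.
        return 0.0
    # The main current follows the control current, up to the ceiling.
    return min(gain * control_current_a, max_current_a)


if __name__ == "__main__":
    for milliamps in (0.0, 1.0, 2.0, 10.0):
        main = controlled_current(milliamps / 1000.0)
        print(f"control {milliamps:5.1f} mA -> main current {main * 1000.0:6.1f} mA")
```

In this sketch a tiny change in the control current produces a change a hundred times larger in the main current, until the ceiling is reached and the transistor is simply fully 'on'. That on/off behaviour is what makes transistors useful as the switches inside digital circuits.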

The first transistor

The first transistor was made in 1947 at Bell Labs in America. It was called a 'point contact transistor'. The revolutionary potential of the transistor was quickly realised: not only could it replace valves, but its small size and low power requirements meant that vast numbers could be fitted into a small space. The first prototype transistor radio was demonstrated in 1952, with mass-produced sets reaching the market in 1954.

What are microchips?

A microchip, also called an integrated circuit, is a group of miniaturised transistors and circuits formed on a single block of semiconducting material such as silicon. The silicon has to be particularly pure, and it is cut into thin slices called wafers.

A typical microchip

It was quickly realised that a wafer of silicon could have more than one transistor formed on its surface. Indeed, minute transistors can be formed on a silicon wafer together with conducting connections, resulting in thousands of transistors forming a complete working computer on a thin piece of silicon smaller than a fingernail!

Moore's law

In 1965 Gordon Moore, who later co-founded Intel, made an observation that has since become known as 'Moore's law'. In the form usually quoted today, it says that the number of transistors packed into a given area of microchip doubles about every two years, while the cost per transistor roughly halves over the same period.
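To get a feel for how quickly repeated doubling adds up, here is a short sketch of the arithmetic. It assumes a clean doubling every two years, which real chips have only ever followed approximately; the function name `projected_transistors` and the starting figure of 1,000 transistors are made up for illustration.

```python
def projected_transistors(initial_count, years, doubling_period_years=2.0):
    """Project a transistor count assuming it doubles at a fixed interval.

    This is the simple exponential reading of Moore's law:
        count = initial_count * 2 ** (years / doubling_period_years)
    """
    doublings = years / doubling_period_years
    return initial_count * 2 ** doublings


if __name__ == "__main__":
    start = 1_000  # illustrative starting count, not a real chip
    for years in (0, 2, 10, 20, 40):
        count = projected_transistors(start, years)
        print(f"after {years:2d} years: about {count:,.0f} transistors")
```

Under these assumptions, ten years gives five doublings (a factor of 32), twenty years gives a factor of about a thousand, and forty years a factor of about a million. This is why a modern chip can hold so many more transistors than the early ones.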

Impact of transistors on computers

Computers have become dramatically smaller since the development of the transistor. They are now all around you, and are so common that they are usually known by names describing their use, such as your smartphone.

To give you an idea of how large they used to be: in the late 1950s I visited the Royal Radar Research and Development establishment in Malvern, where I was allowed to walk around inside a computer. Yes, 'inside'. It ran on glass valves, with walls of glowing valves stretching from floor to ceiling, all in a wire-netting enclosure. The data was saved on reels of tape. There is no doubt that the minute chip inside the simplest of modern phones is vastly more powerful than the museum piece that I walked inside.

The digital revolution

With the miniaturisation of computers, you can understand how they are today incorporated into almost every electric device.

The impossibility of grasping the scale of the forthcoming digital revolution

The digital revolution was so huge that it is understandable that no one could envisage its enormity in its early days. Here are a couple of fairly typical quotes from the time:

"It is very possible that ... one machine would suffice to solve all the problems that are demanded of it from the whole country."

"I think there is a world market for maybe five computers."

Thomas Watson, president of IBM, 1943.

There are a number of similar past predictions from eminent scientists on the internet.

